Augmented Reality Technology-Enabled reMote Integrated Surgery (ARTEMIS)

ARTEMIS overview sketch

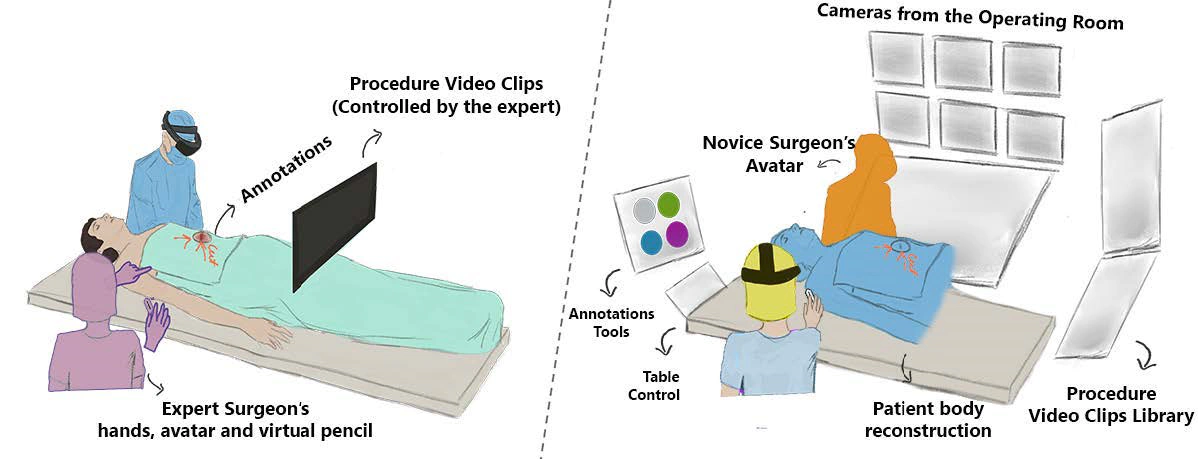

Artistic rendering of ARTEMIS and its features. Left: a novice surgeon in Augmented Reality receiving help from a remote expert. Right: a remote expert surgeon in Virtual Reality interacting with a 3D point cloud of the patient and guiding the novice through a surgical procedure.

Introduction

Specialized surgeries are often needed in places that have no access to specialist surgeons. In these settings, timely telemedicine support can help novice surgeons save lives.

While telesurgery today is typically limited to audio and video, ARTEMIS lets expert and novice surgeons share the same virtual space.

In ARTEMIS, expert surgeons at remote sites use Virtual Reality to access a 3D reconstruction of a patient’s body and instruct novice surgeons on complex procedures as if they were together in the operating room. Novice surgeons in the field can focus on saving the patient’s life while being guided through an intuitive Augmented Reality interface.
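The expert sees the patient as a 3D point cloud. As a rough illustration of how a depth camera (such as a Kinect) can produce such a point cloud, the sketch below back-projects each pixel of a depth image through an ideal pinhole camera model. The intrinsics (`fx`, `fy`, `cx`, `cy`), the millimetre depth units, and the helper name `depth_to_points` are assumptions made for this example; it is not ARTEMIS’s actual reconstruction pipeline.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def depth_to_points(depth_mm: List[List[int]], fx: float, fy: float,
                    cx: float, cy: float) -> List[Point]:
    """Back-project a depth image (millimetres) to 3D points (metres).

    Hypothetical helper: assumes an ideal pinhole camera with no lens
    distortion. Pixels with depth <= 0 are treated as invalid and skipped.
    """
    points: List[Point] = []
    for v, row in enumerate(depth_mm):      # v = pixel row index
        for u, d in enumerate(row):         # u = pixel column index
            if d <= 0:
                continue                    # no depth reading at this pixel
            z = d / 1000.0                  # millimetres -> metres
            x = (u - cx) * z / fx           # pinhole back-projection
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


# Tiny 2x2 depth image in which one pixel has no reading.
depth = [[1000, 0],
         [2000, 1000]]
pts = depth_to_points(depth, fx=500.0, fy=500.0, cx=0.5, cy=0.5)
print(len(pts))  # 3
```

A real system would also apply the camera's lens-distortion model and attach per-point colour from the RGB stream; both are omitted here to keep the geometry visible.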

Personal Contribution and Team

ARTEMIS is the result of a collaboration between my adviser’s lab (Ubicomp Lab), the ARC Lab, and San Diego’s Naval Medical Center.

I have been involved in this project since its earliest stages and have worn multiple hats:
- writing the envisioned architecture for the project (~2017-2018);
- co-running our role-playing sessions;
- co-designing the system and its interface;
- managing the team of developers;
- leading the development of the networking infrastructure;
- conducting user studies;
- writing an academic paper that summarizes user goals and the system design.

The paper was accepted at CHI2021 (acceptance rate < 20%).

Learn More

I am still setting up my website, but I will soon publish detailed articles about:

- ARTEMIS’s design process (for now, see our CHI2021 paper)

- A detailed description of our Headset-OptiTrack calibration as well as our Kinect-OptiTrack calibration
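Calibrating two tracking systems (for example, a headset’s coordinate frame and OptiTrack’s) usually comes down to estimating the rigid transform that maps points measured in one frame onto the same points measured in the other. The sketch below shows the standard SVD-based Kabsch method with NumPy. The helper name `estimate_rigid_transform`, the assumption that point correspondences are already known, and the synthetic data are all illustrative; this is not necessarily the calibration procedure ARTEMIS uses.

```python
import numpy as np


def estimate_rigid_transform(src: np.ndarray, dst: np.ndarray):
    """Kabsch: find R, t minimizing sum ||R @ src_i + t - dst_i||^2.

    Hypothetical helper. src and dst are (N, 3) arrays of corresponding
    points (N >= 3, not all collinear), e.g. the same markers seen by two
    tracking systems.
    """
    src_c = src.mean(axis=0)
    dst_c = dst.mean(axis=0)
    # Cross-covariance matrix of the centred point sets.
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so R is a proper rotation (det = +1).
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    D = np.diag([1.0, 1.0, d])
    R = Vt.T @ D @ U.T
    t = dst_c - R @ src_c
    return R, t


# Synthetic check: rotate four points 90 degrees about z, then translate.
theta = np.pi / 2
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0,            0.0,           1.0]])
t_true = np.array([0.1, -0.2, 0.3])
src = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0],
                [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])
dst = src @ R_true.T + t_true
R, t = estimate_rigid_transform(src, dst)
print(np.allclose(R, R_true), np.allclose(t, t_true))  # True True
```

With real marker data the correspondences are noisy, so in practice one would collect many point pairs and check the residual error of the fitted transform rather than expect an exact match.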