Vibe-coding by Showing

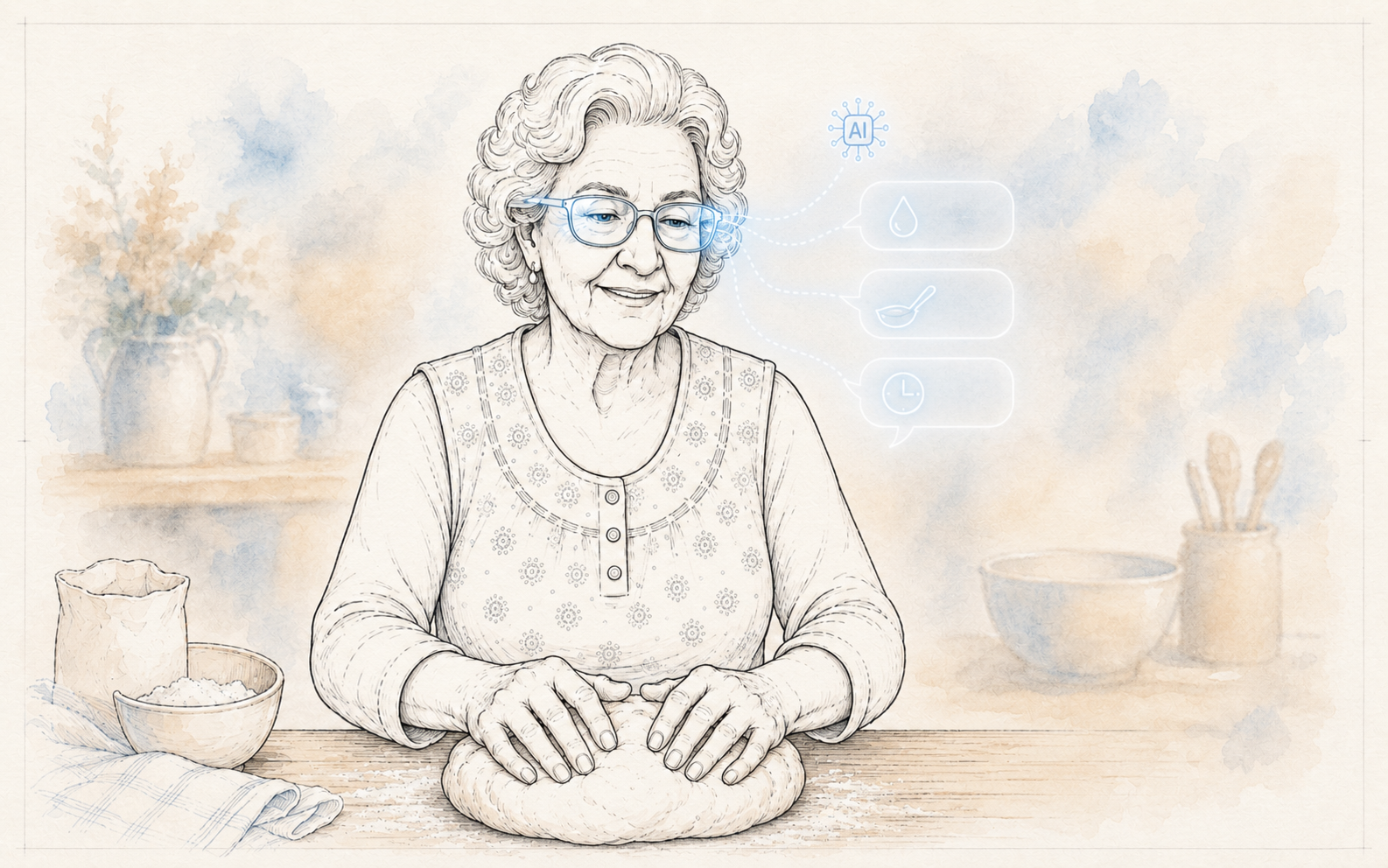

Why my grandma can't vibe-code — and what would let her.

What App Do You Want?

Vibe-coding* is the new way to make software. Anyone can describe what they want, the model writes the code, and they keep moving without seeing the plumbing.

Push that to the limit and you land on a text box. Replit, Lovable, and others ask "what app do you want?" and generate entire applications from a few sentences. The original programmer is almost out of the loop.

But I think "app" is a limiting abstraction — a leftover from an earlier era of software. It forces the user to think of their problem as an app, and to describe, in writing, how the thing should work. That isn't always possible.

My Grandma's Bread Recipe

If my grandma wants to teach the world her 100-year-old bread recipe, the last thing she will google is a way to make an app.

Yet she doesn't want to write the recipe in a notebook. She already has that. She wants something more interactive. She wants to teach everyone the nuanced decisions she makes at each step. She wants to describe the mistakes she has made over the years, and how to recover from them.

All of that is hard to put on paper. It needs a moment, a set of conditions, items on the table, both to evoke her memory and to let her articulate what she does.

Her real recipe is not written on paper. It is tacit knowledge: it lives in the moment.

Right now, the only way she can pass on her craft is by teaching one person, over and over, until they've been through everything that can go wrong. Her struggle is shared by artisans, electricians, senior nurses training new hires on the floor, and anyone whose skill lives in their hands rather than on a screen. None of them want to make an app. They want to teach the way they already teach, but reach more people without being in the room.

The Apprentice

What my grandma needs is an apprentice. Something that learns the way an apprentice would — by being there. Asking why she is adding water. Watching her hands. Listening for the dough. Reading the temperature in the kitchen. Then carrying what it picked up to anyone else, anywhere, without my grandma in the room.

From the Learner's Side

Teaching has always forced a choice. Books and video reach millions, but they can't see that you misread the dough, that you used a different pan, that the humidity is different today. One-on-one teaching adapts — my grandma watches the dough, sees it's too dry, tells me to add water — but only one learner at a time, only while she is in the room. Every medium picks a side: either the learner does all the adapting, or the format doesn't scale.

AI today† can bridge the two: it can learn from the expert, then adapt the teaching to each learner.

The Hard Thing Is Getting the Data

Not the AI. Not the software. Both are "easy" now. What's hard is getting the data: earning your way into the work itself and capturing what experts know without getting in their way. If the goal is for everyone to vibe-code, I want my grandma to vibe-code too, by showing. Whoever executes that "code" might be a human following AI guidance, or it might be a robot. It doesn't matter. Grandma vibe-coded the dough.

Footnotes

* "Vibe-coding" entered the lexicon in early 2025: generating software by describing what you want in natural language, and trusting whatever the model produces. I'm using the term loosely here to mean any creation by description, not just code.

† Specifically, multimodal large language models: systems that can take in language, images, and audio in real time and produce a response that fits the situation.