ARTEMIS: Real-Time Surgical Guidance in Mixed Reality

Augmented Reality Technology-Enabled reMote Integrated Surgery. A mixed-reality system that puts a remote expert surgeon and a novice in the same virtual space during live procedures. Deployed at Naval Medical Center San Diego.

The Problem

Trauma surgery often requires procedures that a local surgeon may not have the experience to perform alone. In those cases, they rely on remote guidance from an expert — telementoring. But today's telementoring is a flat video call. The expert watches a 2D feed of the operating field and tries to sketch annotations over it. They have to map actions they would normally express through gestures and physical demonstrations into a 2D interface. The novice — already overwhelmed by an unfamiliar procedure — has to glance at a nearby screen, translate those flat instructions, and map them back onto the patient's body. Every translation step is a place where errors enter.

I started working on ARTEMIS in 2017. The idea was that spatial computing could remove these translation steps. An expert surgeon should be able to see the patient in 3D, point at anatomy, draw incision lines, and demonstrate tool handling — and the novice should see all of that directly on the patient, without looking away.

Designing With Surgeons

Before writing code, we needed to understand how expert surgeons actually mentor novices. We set up a mock operating room and invited seven domain experts — four surgeons and three OR technology specialists, including US Navy surgeons experienced in battlefield care — to participate in role-playing sessions.

Each session had two stages. First, we asked an expert to walk through an emergency procedure (a needle decompression, a leg fasciotomy, or a cricothyrotomy) while enacting it on a mannequin. This let us observe how mentors make sense of what they do: how they make decisions, how they communicate surgical steps, and what they expect from the novice. Then, in the second stage, we had them try existing mixed-reality tools and our early prototypes, acting as remote mentors while a designer played the novice.

Surgeons don't just tell novices what to do. They show them. They point to anatomical landmarks. They mark incision paths with skin markers. They demonstrate tool handling by mimicking motions above the patient. As one surgeon put it: “I want to be able to do two things. I want to be able to point and say: ‘this is your septum.’ And then I want to be able to draw a line, like this, and say: ‘make your incision here.’” Surgical expertise is spatial. It lives in gestures, hand positions, and physical demonstrations that don't survive translation to a 2D screen.

Equally important was what surgeons told us about the novice's experience. The novice is already overwhelmed. They're performing an unfamiliar procedure, under pressure, on a real patient. The system shouldn't demand their attention. It should quietly deliver guidance into their field of view. As one expert put it: “The idea of only getting an ON button is what most people would want.”

From these sessions we identified four design goals: experts need to (1) watch the procedure from the novice's perspective, (2) show the location of anatomical landmarks, (3) mark the location, length, and depth of incisions, and (4) demonstrate tool use — all without adding cognitive load to the novice.

How ARTEMIS Works

ARTEMIS was the first surgical telementoring system to give expert surgeons an immersive VR operating room. After twelve months of iterative design and development with our clinical collaborators, we built a system where the expert and novice share a 3D space.

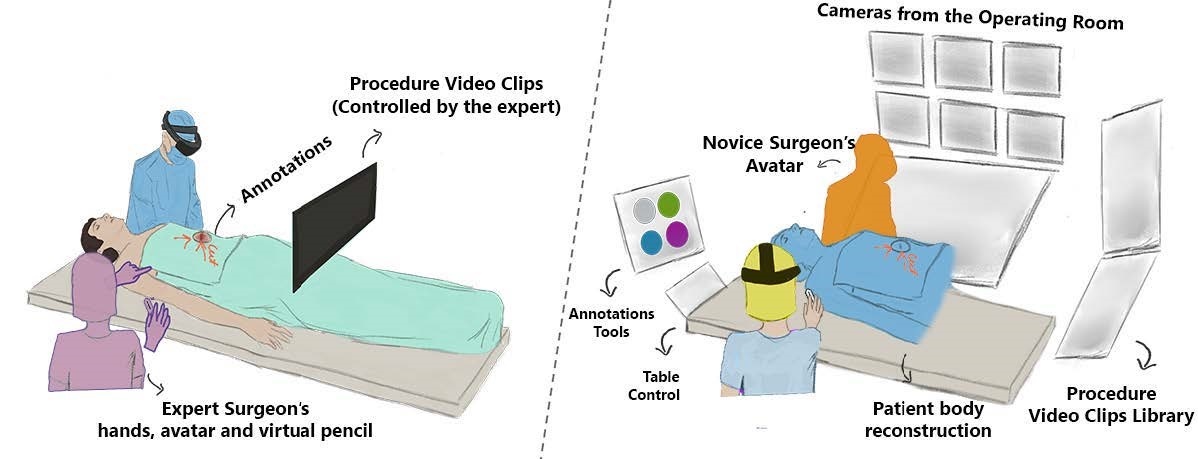

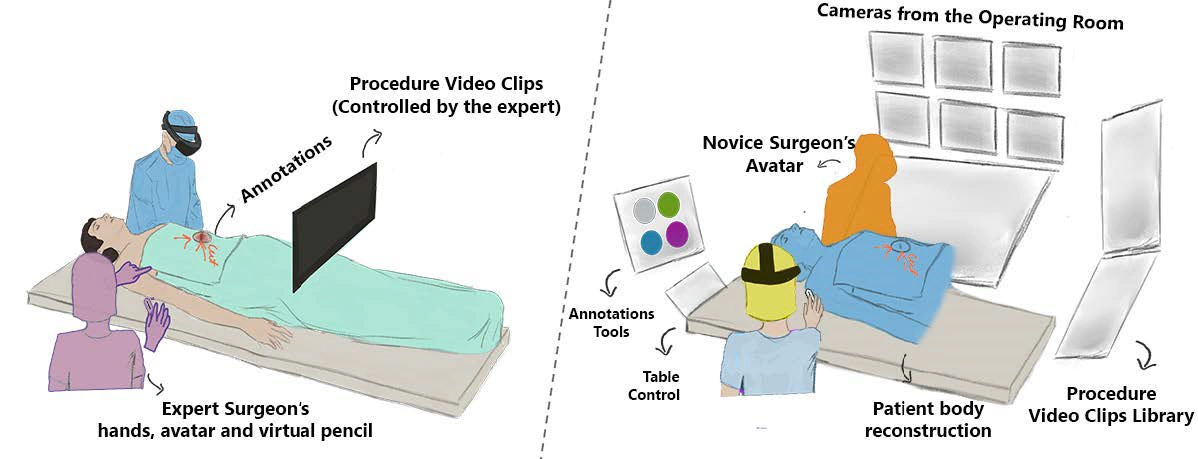

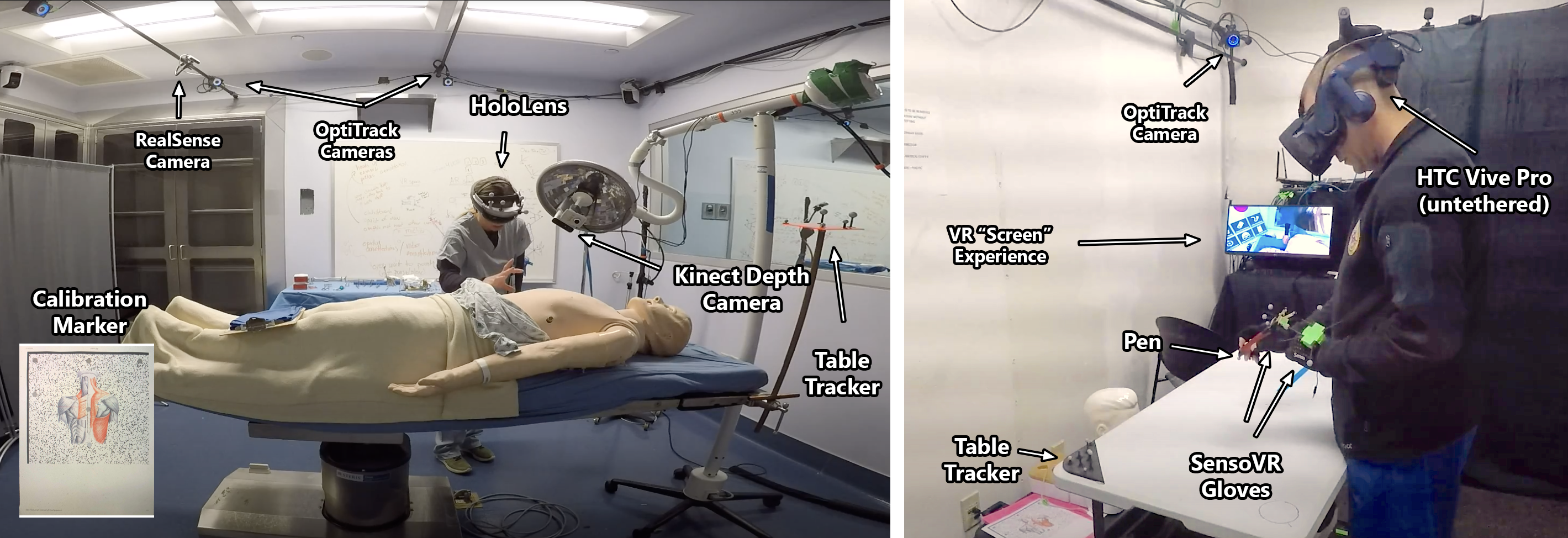

The Expert's World: Virtual Reality

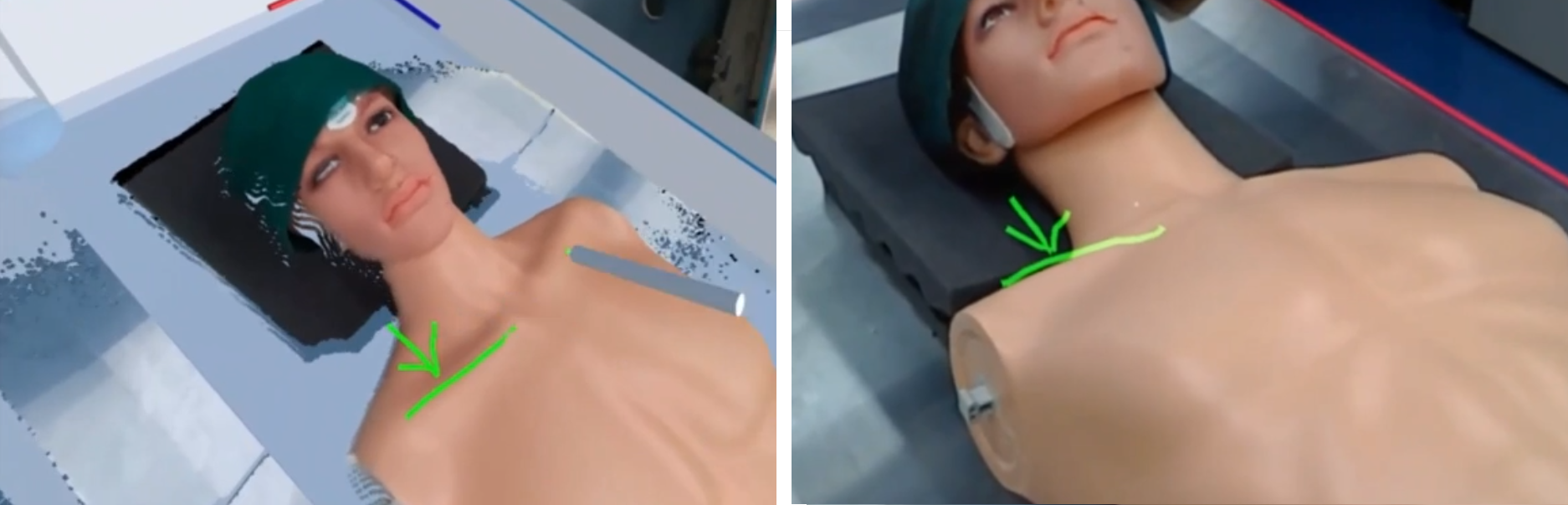

The remote expert puts on a VR headset and enters a virtual operating room. At the center of this room is a real-time 3D point-cloud reconstruction of the patient's body, built from depth cameras in the actual OR. The expert can walk around this reconstruction, lean in close, and see the patient from any angle. Surrounding the point cloud are live camera feeds from the OR, the novice's first-person view, and a control panel for managing the session.
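
As a rough illustration of how depth-camera frames can become a point cloud, the sketch below back-projects each depth pixel into 3D using a standard pinhole camera model. This is not taken from the ARTEMIS codebase: the function name `backproject_depth`, the intrinsics `fx`, `fy`, `cx`, `cy`, and the tiny 2x2 depth image are all assumptions made for the example, and a real pipeline would also fuse multiple cameras and attach color.

```python
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]


def backproject_depth(
    depth_m: Sequence[Sequence[float]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point3]:
    """Turn a depth image (in meters) into camera-frame 3D points.

    Uses the pinhole model: a pixel (u, v) with depth z maps to
    x = (u - cx) * z / fx and y = (v - cy) * z / fy.
    """
    points: List[Point3] = []
    for v, row in enumerate(depth_m):
        for u, z in enumerate(row):
            if z <= 0.0:
                # Non-positive depth is treated as a missing measurement.
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


# Hypothetical 2x2 depth image and intrinsics, for illustration only.
depth = [[0.0, 1.0], [2.0, 0.0]]
cloud = backproject_depth(depth, fx=2.0, fy=2.0, cx=0.5, cy=0.5)
print(cloud)
```

Pixels with no valid depth are skipped, which is one reason a live reconstruction can show holes where a camera's view of the patient is occluded.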

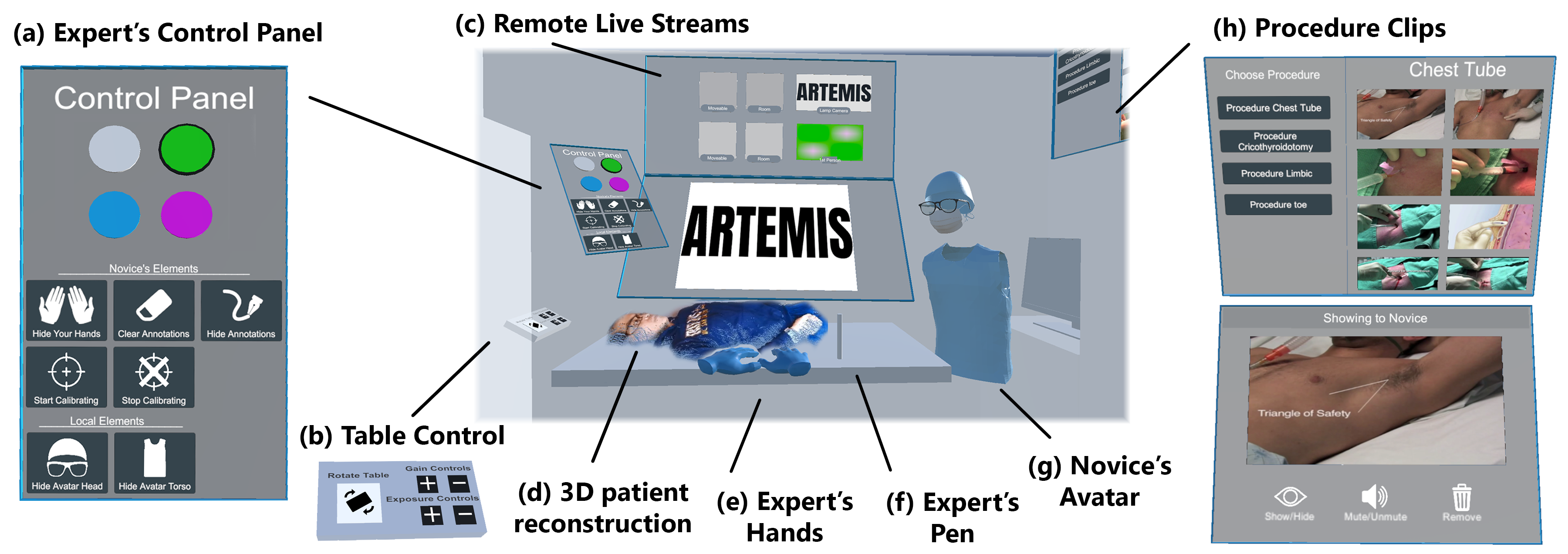

Instead of VR controllers, the expert wears gloves, just like surgical gloves, and holds a physical pen. No buttons to learn, no abstract mappings. They point at anatomy, draw 3D annotations directly on the patient's body, and demonstrate tool handling with their actual hands. Everything they do shows up in real time on the novice's side.

The Novice's World: Augmented Reality

The novice surgeon wears an AR headset and focuses entirely on the patient. By design, the novice cannot directly interact with the interface; the expert controls everything remotely. This was a deliberate decision that came out of our role-playing sessions: a novice performing an unfamiliar procedure should not be burdened with managing a mixed-reality interface.

The novice sees the expert's hands and avatar in the room as if the expert were standing there. 3D annotations — incision lines, landmark markers — appear directly on the patient's body. If the expert walks to the same position as the novice, their hands become a second pair of hands guiding the procedure from the same point of view.
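
For an annotation drawn in the expert's virtual room to land on the real patient, its points have to be expressed in the novice headset's coordinate frame. A minimal sketch of that idea, assuming the two frames are related by a known rigid transform (rotation plus translation), is shown below. How ARTEMIS actually calibrates the two spaces is not described here; `make_rigid_transform`, `transform_points`, and the example values are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]
Matrix4 = List[List[float]]


def make_rigid_transform(yaw_rad: float, translation: Point3) -> Matrix4:
    """Build a 4x4 rigid transform: rotate about the z axis, then translate."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    tx, ty, tz = translation
    return [
        [c, -s, 0.0, tx],
        [s, c, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def transform_points(matrix: Matrix4, points: Sequence[Point3]) -> List[Point3]:
    """Apply a 4x4 homogeneous transform to a list of 3D points."""
    out: List[Point3] = []
    for x, y, z in points:
        out.append((
            matrix[0][0] * x + matrix[0][1] * y + matrix[0][2] * z + matrix[0][3],
            matrix[1][0] * x + matrix[1][1] * y + matrix[1][2] * z + matrix[1][3],
            matrix[2][0] * x + matrix[2][1] * y + matrix[2][2] * z + matrix[2][3],
        ))
    return out


# Hypothetical 10 cm incision line drawn in the expert's frame.
stroke_expert = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0)]

# Assumed calibration: the novice frame is rotated 90 degrees and offset.
expert_to_novice = make_rigid_transform(math.pi / 2, (1.0, 2.0, 0.0))
stroke_novice = transform_points(expert_to_novice, stroke_expert)
print(stroke_novice)
```

Because the transform is rigid, the stroke keeps its length and shape. Any error in the assumed calibration shifts the whole annotation relative to the patient.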

Putting It to the Test

We deployed ARTEMIS at Naval Medical Center San Diego. Five expert surgeons (Senior Critical Care Intensivists and Staff Surgeons) mentored six novices (Surgical Technicians, Medics, and Junior Surgical Residents) through 22 procedures on mannequins and cadavers, including cricothyroidotomy, leg fasciotomy, femoral artery exposure, and resuscitative thoracotomy.

Novices completed procedures they had never performed before, guided entirely by a remote expert through ARTEMIS. One expert, watching his novice complete five procedures in 40 minutes — two of which the novice had never done — said: “To do those 5 procedures in 40 minutes, especially 2 of which he's never done before... is pretty great.”

The 1 cm Error

In ARTEMIS, novice surgeons follow virtual lines to make incisions. If those lines are off by a couple of centimeters, they could lead a novice to cut at the wrong location. Despite our best technical efforts, the AR interface was often off by about one centimeter.
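
To make "off by about one centimeter" concrete, the sketch below computes a mean registration error between where annotation points were meant to appear and where they were displayed, then converts a 1 cm offset into a visual angle. The sample points, the 50 cm viewing distance, and the function names are assumptions for illustration, not measurements from the study.

```python
import math
from typing import Sequence, Tuple

Point3 = Tuple[float, float, float]


def mean_registration_error(
    intended: Sequence[Point3], displayed: Sequence[Point3]
) -> float:
    """Mean Euclidean distance (meters) between matched point pairs."""
    if not intended or len(intended) != len(displayed):
        raise ValueError("point lists must be non-empty and the same length")
    total = sum(math.dist(p, q) for p, q in zip(intended, displayed))
    return total / len(intended)


def visual_angle_deg(offset_m: float, viewing_distance_m: float) -> float:
    """Angle subtended by a lateral offset seen from a given distance."""
    return math.degrees(math.atan2(offset_m, viewing_distance_m))


# Hypothetical incision endpoints, displayed 1 cm to the side of the target.
intended = [(0.00, 0.0, 0.5), (0.05, 0.0, 0.5)]
displayed = [(0.01, 0.0, 0.5), (0.06, 0.0, 0.5)]

error_m = mean_registration_error(intended, displayed)
angle = visual_angle_deg(0.01, 0.5)  # assumed 50 cm working distance
print(f"mean error: {error_m * 100:.1f} cm, visual angle: {angle:.2f} deg")
```

Under that assumed distance, a 1 cm offset corresponds to a little over one degree of visual angle, which gives a sense of how small an angular misalignment is enough to produce a visible shift on the patient.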

But in practice, expert-novice pairs could still follow annotations correctly. Mentors drew additional lines to add context, or verbalized instructions to guide novices around misalignment. When an expert asked a novice whether the 3D annotations were helpful despite their spatial imprecision, she replied: “Yeah, definitely. It's pretty neat actually.” Another pair discovered that by standing over the body from the same point of view, they could naturally compensate for alignment issues. Verbal cues, gestures, and shared context patched what the technology could not.

We had already ruled out tracking as the cause. So what was producing a consistent 1 cm registration error? That question led directly to my next project: building a simulator to isolate the factors inside head-mounted displays that cause registration errors even when tracking is perfect.

Publications

Gasques, D., Johnson, J., Sharkey, T., Feng, Y., Wang, R., Xu, Z. R., Zavala, E., Zhang, Y., Xie, W., Zhang, X., Davis, K., Yip, M., & Weibel, N. (2021). ARTEMIS: A collaborative mixed-reality system for immersive surgical telementoring. Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems.

Tadlock, M. D., Olson, E. J., Gasques, D., Champagne, R., Krzyzaniak, M. J., Belverud, S. A., ... & Davis, K. (2022). Mixed reality surgical mentoring of combat casualty care related procedures in a perfused cadaver model: Initial results of a randomized feasibility study. Surgery, 172(5), 1337–1345.

Weibel, N., Gasques, D., Johnson, J., Sharkey, T., Xu, Z. R., Zhang, X., Zavala, E., Yip, M., & Davis, K. (2020). Collaborative mixed-reality system for immersive surgical telementoring. CHI EA 2020.

"I want to be a surgeon!" Role playing for remote surgery in mixed reality. CHI 2019 Workshop, Glasgow.

Technical review. MHSRS 2019, Orlando.

Patent

US12482192B2 — Collaborative mixed-reality system for immersive surgical telementoring. The Regents of the University of California.

Team

Danilo Gasques, Ph.D., Janet Johnson, Tommy Sharkey, Enrique Zavala, Xinming Zhang, Zhuoqun Robin Xu, Yuanyuan Feng, Ru Wang, Yifei Zhang, Wanze Xie, Konrad Davis, M.D., Michael Yip, Ph.D., Nadir Weibel, Ph.D.