Augment, Instruct, Execute, and Verify

We designed a four-step AR workflow that turns an iPad and a 3D-printed wound model into an interactive training system — no headset, no expert, no expensive simulation lab required.

The Problem

Chronic wounds — pressure ulcers, diabetic ulcers, venous ulcers — cost the US healthcare system between $28 billion and $97 billion a year. Nurses do most of the hands-on care. But wound care training in nursing school is thin. One nurse we interviewed called it “rudimentary” with “very minimal exposure to actual wounds.” On the job, learning is opportunistic: there is no rotation for wound care, so you pick it up by shadowing a more experienced nurse who happens to be treating a wound patient. If a specialist is available, they rarely have time to go deep with every nurse on the floor. A physician assistant summed it up: “Most of the work is pushed off to the front-line staff, but they don't have the right training. Learn to swim or drown.”

Continuing Medical Education (CME) workshops try to solve the access problem. They give nurses structured time to practice outside of patient care — rounds, refresher courses, hands-on sessions led by wound specialists. But the training materials inside those workshops are limited. Standard wound care models are static plastic molds — one wound type, one stage, permanently fixed. A real chronic wound may present cellulitis, necrosis, exudate, bleeding, tunneling, or exposed bone, and it changes week to week. No plastic model captures that. Buying enough models to cover the range is expensive, and they still can't show progression.

Method

We wanted to tackle both problems: make training accessible outside of opportunistic bedside learning, and make the training itself dynamic enough to cover real clinical complexity. Following Stanford’s Gift Giving approach to experience-based design, we ran a co-design workshop with wound care clinicians to understand what they actually needed. We interviewed nine clinicians — a plastic surgeon, two primary care physicians, four registered nurses without wound care certification, one certified wound care nurse, and a physician assistant. We also explored whether newer technologies — AR and 3D printing — could close the gap that static models leave open.

Design constraints

We wanted something nurses could use during a CME lunch-and-learn. That framed the solution space: cost-effective, deployable nationwide, and built on technology clinicians already had on hand. Microsoft’s HoloLens was the headline AR device in academia at the time, but a head-mounted display was the wrong answer — expensive, scarce, and unrealistic to ask a room of nurses to strap on between patients. The same constraint ruled out fully robotic simulation mannequins.

Converging on a solution

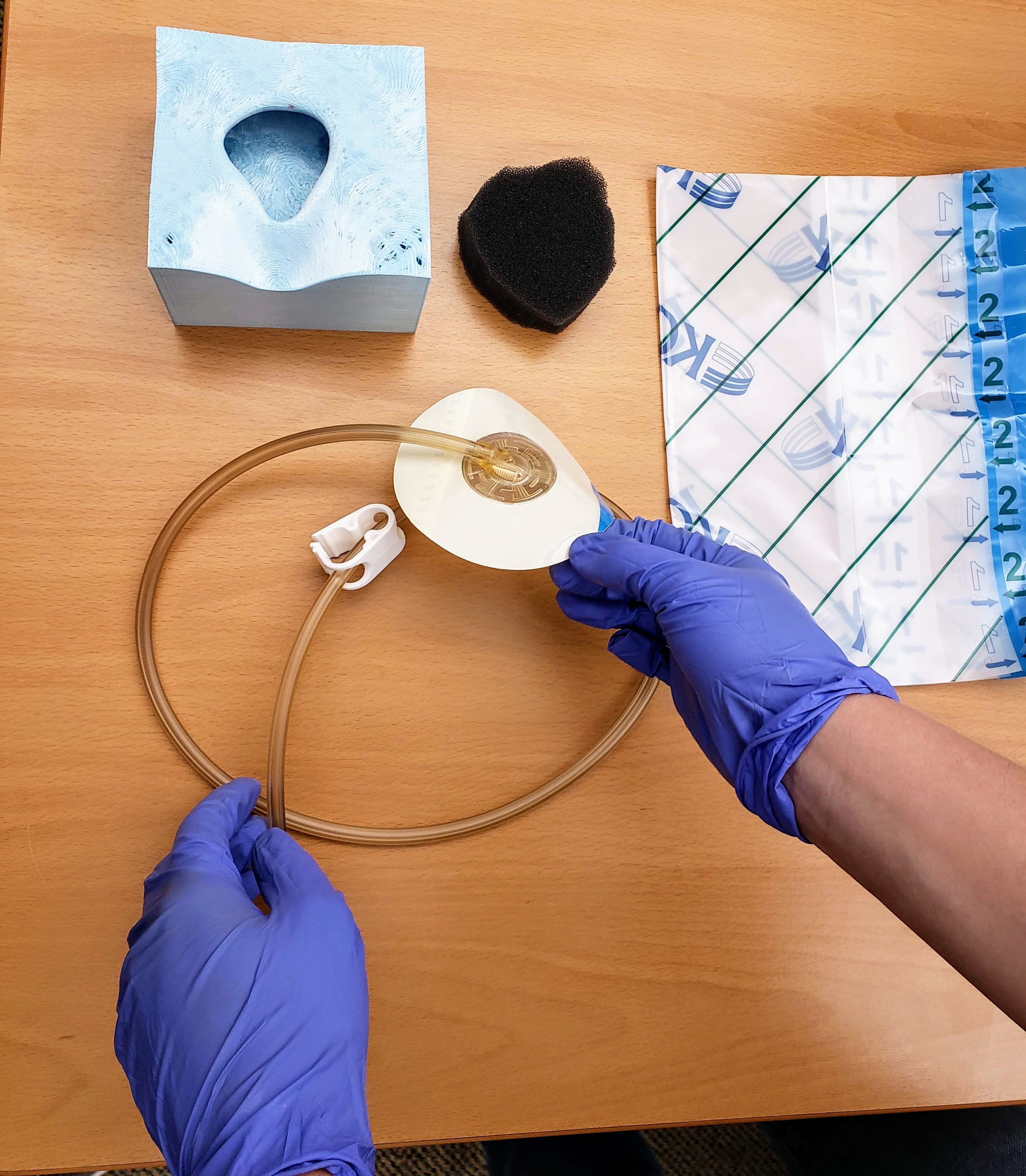

One thread ran through the proposed solutions: use what every clinician already carries, and what most CME programs already have in the room — a phone or a tablet. For the physical side, the workshop landed on 3D printing. Clinicians could scan complex wound cases from rounds and reproduce them on demand for any number of trainees. Scanning and printing was the easy half. The harder half was simulating the procedure steps and checking that the trainee performed them correctly.

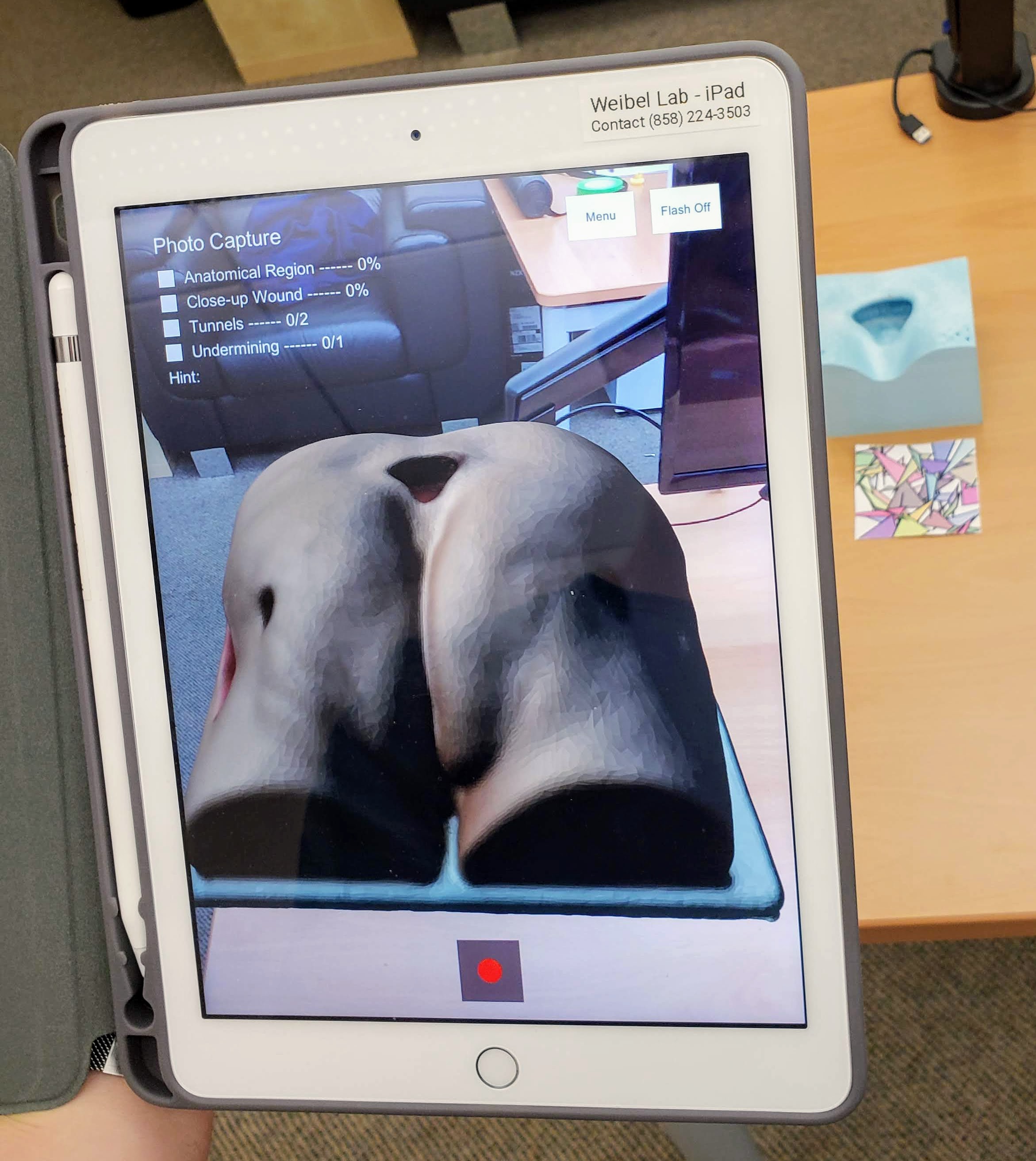

An iPad with ARKit gave us camera-based AR — enough to render bleeding on a printed prop and place step-by-step instructions in situ. But it created a specific design problem. The trainee needs both hands free to apply a dressing, check tubing, or position a wound VAC. You cannot hold a tablet and perform a procedure at the same time. So the question became: how do you guide someone through a physical task on a device they have to put down to do the task?

This is the focus of the workshop paper and this post. Our answer: Augment, Instruct, Execute, Verify.

Augment, Instruct, Execute, Verify

Over a year of interviews and prototyping with wound care experts, we converged on a four-step cycle organized around the moment the tablet has to go down. The system does its work at the bookends — setting the scene and checking the result — and gets out of the way in the middle, when the trainee needs both hands.

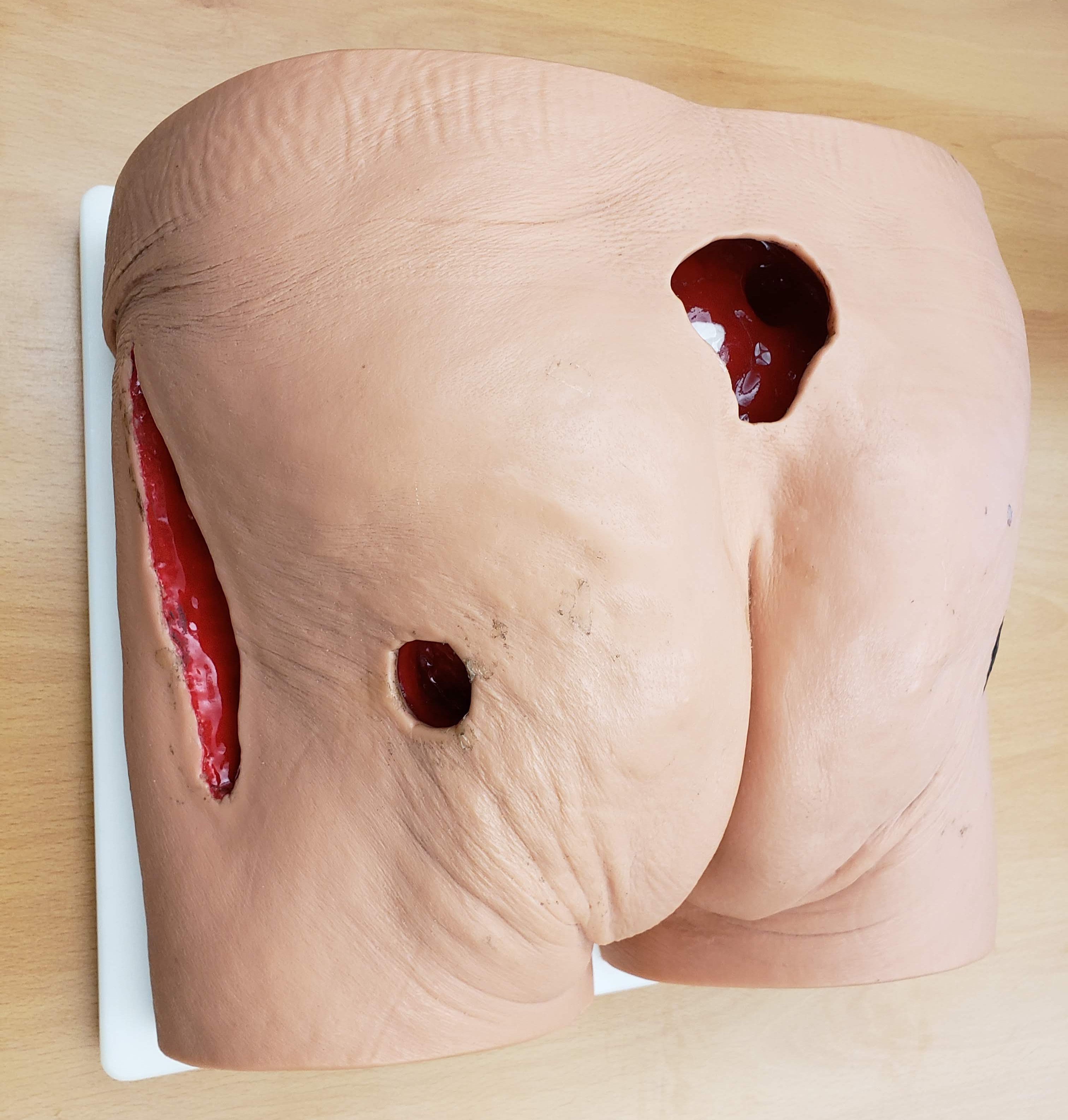

Augment. The trainee points the iPad at a 3D-printed wound block. AR overlays a realistic wound texture onto the physical prop, simulating bleeding, necrosis, or exudate on the same printed block. One 3D print can stand in for many wound presentations. The AR also renders the surrounding anatomy that the small prop cannot represent, so the trainee sees the wound in the context of the body.

Instruct. With the wound in context, the system overlays step-by-step instructions on the scene. Where to look. What to gather. How to position a dressing. The instructions appear near the area of action, not in a separate manual or on a different screen.

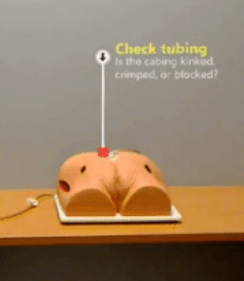

Execute. The trainee puts the tablet down and does the work with both hands. This is the step that separates this from screen-only training. The 3D-printed model gives real haptic feedback — the trainee feels the wound topology, handles the dressing materials, works with the actual tools.

Verify. The trainee picks the tablet back up. The camera and basic computer vision check what was done: were the right dressings gathered, were the photos taken from the right angles, was the step completed? Then the cycle repeats for the next step.
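To make the cycle concrete, here is a minimal Python sketch of one step as a small state machine. It is an illustration, not the Unity code behind the prototypes. The names `Phase`, `Step`, and `run_cycle` are hypothetical, the observation is a plain dictionary standing in for whatever the camera reports, and retrying a failed step is an assumption of this sketch rather than something the prototypes are known to do.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List, Tuple


class Phase(Enum):
    AUGMENT = "augment"    # tablet up: overlay the wound on the printed prop
    INSTRUCT = "instruct"  # tablet up: show the instruction near the wound
    EXECUTE = "execute"    # tablet down: trainee works with both hands
    VERIFY = "verify"      # tablet up: check what was done


@dataclass
class Step:
    instruction: str
    # Hypothetical check: takes an observation and says whether the step passed.
    verify: Callable[[dict], bool]


def run_cycle(
    step: Step,
    observe: Callable[[], dict],
    max_attempts: int = 3,
) -> Tuple[bool, List[Phase]]:
    """Run one step and return (passed, phases visited in order)."""
    trace = [Phase.AUGMENT]
    for _ in range(max_attempts):
        trace += [Phase.INSTRUCT, Phase.EXECUTE, Phase.VERIFY]
        if step.verify(observe()):
            return True, trace
        # Assumption: on a failed check, show the instruction again and retry.
    return False, trace
```

A full workflow would call `run_cycle` once per step and move on only when it returns `True`, which mirrors the "cycle repeats for the next step" behavior described above.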

Two Prototypes

We built both prototypes in Unity with ARKit, using image tracking to anchor AR content to markers placed near the wound block.

Wound Documentation. Nurses need to photograph wounds from specific angles to capture location, tunneling, and undermining. The system walks them through the process step by step. The Verify component uses the tablet's spatial position to confirm that each photo was taken from the correct angle.
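One way to picture the angle check is as a comparison between where the tablet actually was and where it should have been. The Python sketch below assumes the tablet and wound positions are available in the same coordinate frame (for example, relative to the tracked marker) and that each required photo has a target viewing direction. The function names and the 15-degree tolerance are illustrative assumptions, not values from the prototype.

```python
import math
from typing import Sequence


def angle_between_deg(a: Sequence[float], b: Sequence[float]) -> float:
    """Angle in degrees between two 3D vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("vectors must be non-zero")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.degrees(math.acos(cos_theta))


def photo_angle_ok(
    tablet_pos: Sequence[float],
    wound_pos: Sequence[float],
    target_dir: Sequence[float],
    tolerance_deg: float = 15.0,  # assumed tolerance, for illustration only
) -> bool:
    """True if the tablet sat within tolerance of the target viewing direction."""
    # Direction from the wound to the tablet at the moment of the photo.
    view_dir = [t - w for t, w in zip(tablet_pos, wound_pos)]
    return angle_between_deg(view_dir, target_dir) <= tolerance_deg
```

With the wound at the origin and a target direction of straight up, a tablet held directly above the block passes, while one held off to the side at roughly 45 degrees does not.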

Wound Assessment. The same 3D-printed block with different AR textures — necrotic tissue, bleeding, exudate. The trainee identifies what they see. The system confirms their assessment before the next case. Same physical model, different clinical scenario, every time.
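The "same block, different scenario" idea can be sketched as data: each scenario pairs an AR texture with the findings the trainee should identify. The Python below is a hypothetical illustration. The `Scenario` type, the texture name, and the feedback format are assumptions, and the prototype may confirm assessments differently.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable


@dataclass(frozen=True)
class Scenario:
    texture: str              # hypothetical name of the AR texture to overlay
    findings: FrozenSet[str]  # what the trainee is expected to identify


def check_assessment(scenario: Scenario, answer: Iterable[str]) -> Dict[str, object]:
    """Compare the trainee's findings against the scenario's expected findings."""
    given = {a.strip().lower() for a in answer}
    return {
        "correct": given == scenario.findings,
        "missed": sorted(scenario.findings - given),
        "extra": sorted(given - scenario.findings),
    }


# Example scenario; the texture name is made up for illustration.
necrotic_bleeding = Scenario(
    texture="necrotic_with_bleeding",
    findings=frozenset({"necrosis", "bleeding"}),
)
```

Swapping in a different `Scenario` changes the clinical case without touching the physical block, which is the point of reusing one print.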

The Bigger Question

This was a workshop paper. A small project. But it made something concrete that I had been circling around for a while.

The four-step cycle (show context, give instructions, let them do it, check their work) worked for wound documentation and wound assessment. It should carry over to many other physical tasks. The pattern is general. The problem was everything around it. Every instruction had to be manually authored. Every verification step had to be hand-tuned. Every workflow had to be built from scratch by a developer for a single procedure. We spent months building content for two scenarios.

The wound care specialists we worked with knew exactly how to teach these procedures. They could walk a nurse through a wound VAC setup in ten minutes. But they could not create the AR content themselves. That required Unity, C#, ARKit, and weeks of iteration. The expertise lived in the clinicians' heads. The technology required a developer to extract it.

What if the experts could teach the system directly? What if creating a guided workflow were as simple as doing the task once while the system watches? The Augment-Instruct-Execute-Verify cycle would still work. But instead of a developer building each workflow by hand, anyone with domain knowledge could produce one. That was the question I kept coming back to.

Publication

Gasques, D., Liu, W., Broder, K., Chau, D., & Weibel, N. (2019). Augment, Instruct, Execute, and Verify: Augmented Reality Wound Care Training with 3D Printed Props. WISH Workshop, CHI 2019 Conference on Human Factors in Computing Systems, Glasgow, Scotland.

Team

Danilo Gasques, Ph.D., Weichen Liu, Kevin Broder, M.D., Diane Chau, M.D., Nadir Weibel, Ph.D.

Acknowledgments

This work was conducted in collaboration with the VA San Diego Healthcare System and clinicians from the UCSD Geriatrics Workforce Enhancement Program. We thank the nine clinicians who volunteered their time for interviews during the 2018 Annual Wound Care Workshop.