Situated Ultrasound Training in Augmented Reality

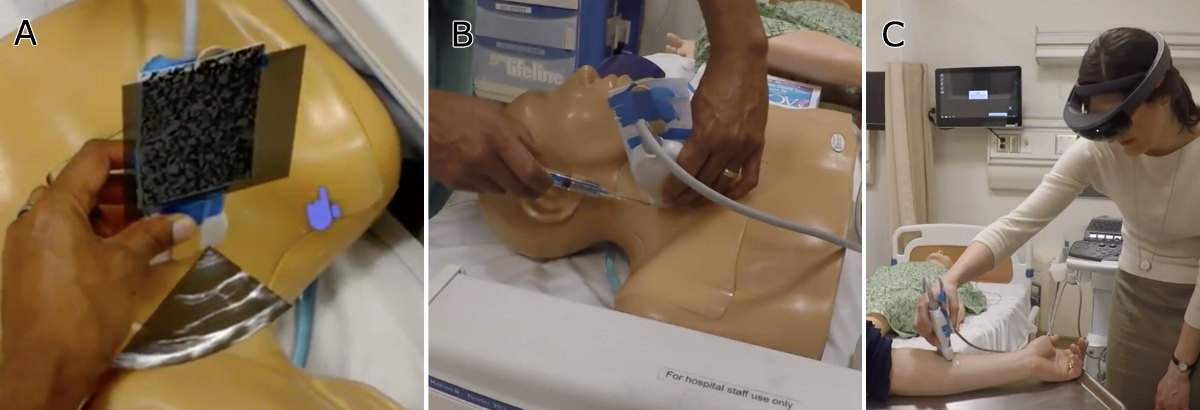

A mixed-reality platform that uses spatial computing on the Magic Leap headset to create situated, contextualized ultrasound training, providing AR visual aids and performance metrics that transform how students learn ultrasound-guided procedures.

The Problem

Imagine you are a medical student learning to place a central line, a catheter threaded into a large vein near the neck. You hold an ultrasound probe in one hand and a needle in the other. The ultrasound lets you see inside the patient, but that image lives on a monitor several feet away. So you do what every student does: you look down at the patient, then up at the screen, then back down, then up again. Back and forth, over and over, trying to reconcile two completely different views of the same anatomy.

This is the split-attention problem at the heart of ultrasound-guided procedures, and it affects far more than just students. With over 5 million central line placements and 1 million breast biopsies performed annually in the US alone, ultrasound guidance is ubiquitous — and universally difficult. As one expert put it, “Keeping the needle tip in view as the needle is advanced toward the target is much more difficult” than most people realize. The errors that follow can lead to nerve injury, infection, and worse.

The core issue is cognitive, not just physical. Clinicians must perform complex mental transformations to map what they see on a 2D screen to the patient's 3D anatomy. They rotate, translate, and scale the image in their heads, all while steering a needle they cannot directly see. This cognitive burden does not go away with experience; it just becomes more automatic. And when it fails, the consequences are real.

I saw an opportunity to use spatial computing to collapse this attention gap entirely. Instead of forcing students to build a mental bridge between two disconnected views, what if we placed the ultrasound image right where it belongs — on the patient, in the student's line of sight, at real-world scale?

UltraTracker: A Systematic Approach

This work was a synthesis of years of collaborative research between our Computer Science department and the School of Medicine. Guided by participatory design, contextual inquiry, and extensive prototyping with clinicians, we built a platform we called UltraTracker.

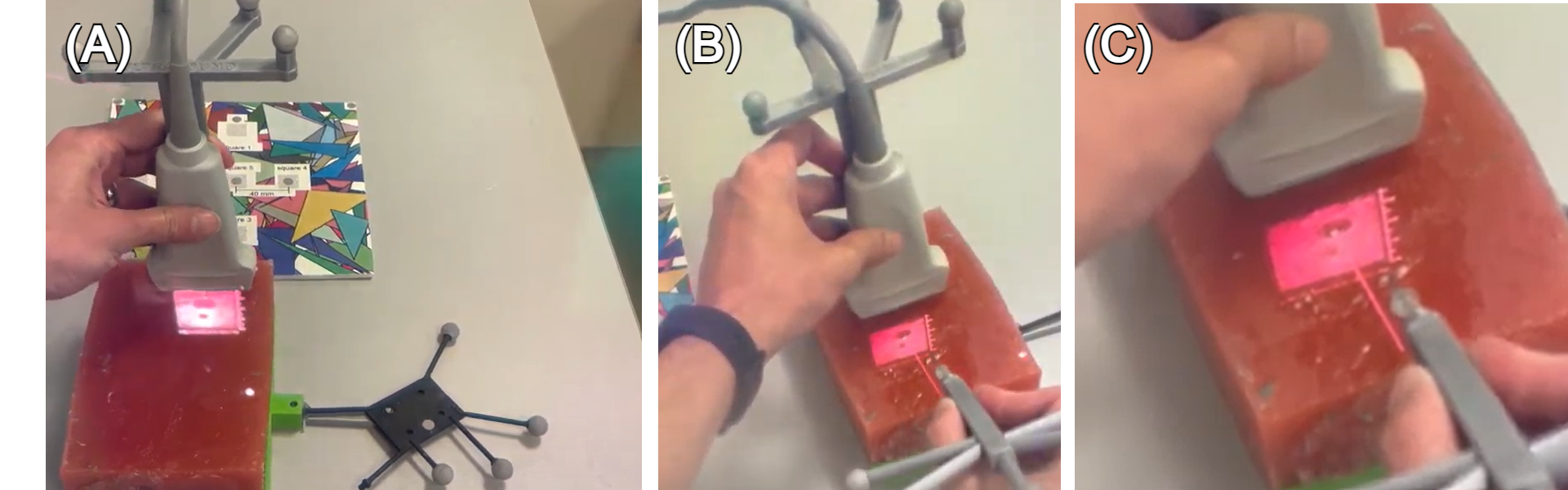

The system combined optical tracking of the ultrasound probe and needle with a mixed-reality headset, creating three distinct training views: a traditional video-based training mode, a screen-based mode with real-time tracking overlays, and a full AR mode where the ultrasound image was spatially situated on the patient. To evaluate which approach actually helped students learn, we designed a randomized controlled study. Students first took a spatial reasoning test (since mental rotation ability is a known confound in ultrasound learning), then completed a pre-test on a gelatin phantom, were randomized into one of the three groups, trained for two hours, and finally took post-tests, all assessed by blinded expert clinicians.

The Situated Ultrasound Breakthrough

In our pilot studies, the reaction was immediate and enthusiastic. Medical students and practitioners championed the situated display because it made ultrasound imaging feel intuitive for the first time. No more mental gymnastics to map screen to patient. But then they tried to guide a needle under the situated image, and the excitement hit a wall. The registration of the AR display was slightly off, and in a domain where you have only a millimeter or two of tolerance, “slightly off” meant unusable.

This could have been the end of the story. Misaligned AR, unusable for needle guidance, wait for better hardware. But we discovered something more interesting.

The Power of Contextual Cues

After extensive prototyping, we realized that a simple addition could make the misaligned display usable: showing the needle in the same coordinate system as the ultrasound image. Even though both visualizations were offset from physical reality by the same registration error, they were consistent with each other. The virtual needle line pointed at the same anatomy the situated ultrasound image showed. Users could use the virtual needle as a proxy for the real one, guiding it to the target vessel visible in the situated ultrasound, without needing pixel-perfect alignment with the physical world.
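The reason a shared offset is harmless can be checked numerically. The sketch below is an illustration only, not UltraTracker code: it assumes the registration error acts as a single rigid transform applied to everything the headset renders, and all function names and pose values are hypothetical. It expresses the needle tip in the ultrasound image's own frame, with and without the error, and the two results agree.

```python
import math

def mat_mul(a, b):
    """Multiply two 4x4 homogeneous transforms."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def apply(t, p):
    """Apply a 4x4 rigid transform to a 3D point."""
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(t[i][k] * v[k] for k in range(4)) for i in range(3))

def rigid_inverse(t):
    """Invert a rigid transform: the inverse of [R | d] is [R^T | -R^T d]."""
    r_t = [[t[j][i] for j in range(3)] for i in range(3)]
    d = [t[i][3] for i in range(3)]
    new_d = [-sum(r_t[i][k] * d[k] for k in range(3)) for i in range(3)]
    return [r_t[0] + [new_d[0]],
            r_t[1] + [new_d[1]],
            r_t[2] + [new_d[2]],
            [0.0, 0.0, 0.0, 1.0]]

def rot_z(deg, translation):
    """Rigid transform: rotation about z by `deg` degrees, then a translation."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    tx, ty, tz = translation
    return [[c, -s, 0.0, tx],
            [s, c, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]

def tip_in_image_frame(image_pose, tip_world):
    """Express the needle tip relative to the ultrasound image plane."""
    return apply(rigid_inverse(image_pose), tip_world)

# Hypothetical tracked poses in the tracker's frame (millimeters).
image_pose = rot_z(30.0, (100.0, 50.0, 20.0))
tip_world = (110.0, 62.0, 25.0)

# Assumed registration error: everything rendered is rotated and shifted together.
registration_error = rot_z(2.0, (3.0, -1.5, 0.5))
displayed_image_pose = mat_mul(registration_error, image_pose)
displayed_tip = apply(registration_error, tip_world)

true_relative = tip_in_image_frame(image_pose, tip_world)
displayed_relative = tip_in_image_frame(displayed_image_pose, displayed_tip)

print(true_relative)
print(displayed_relative)
```

Both printed tuples match up to floating-point rounding, even though the displayed needle tip sits several millimeters away from the physical one. The error cancels because it multiplies both poses on the same side; an error that affected only the image or only the needle would not cancel.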

This was the key insight: even misaligned AR is useful when combined with proper contextual cues. Situated guidance can be beneficial today, without waiting for fundamental improvements in tracking and display calibration. What matters is giving users the right frame of reference, visual anchors that let them reason about the relationship between virtual information and physical reality, even when the two don't perfectly overlap.

Technical Challenges

Getting to this insight required solving a long list of engineering problems. The vergence-accommodation conflict in head-mounted displays made near-field visualizations difficult to focus on, so we designed virtual focus aids. Dynamic phantom calibration drifted during studies, requiring software that could record, replay, and recalibrate after the fact. Needle tracking was plagued by bending (the needle flexes inside tissue) and wobbly mounting, both of which we had to compensate for in software. Tracking jitter caused false collision events, which we filtered by ignoring repeated triggers that fell within the same 100 ms window. And SLAM drift on the headset itself introduced slow, accumulating errors that had to be managed across the duration of each training session.
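The jitter filter is the easiest of these to illustrate. The sketch below is one plausible reading of the 100 ms rule, not the original implementation: it assumes each collision trigger carries a millisecond timestamp and that a trigger is dropped when it arrives less than 100 ms after the last accepted one. The function name `debounce_collisions` is hypothetical.

```python
def debounce_collisions(timestamps_ms, window_ms=100):
    """Keep a trigger only if it arrives at least `window_ms` after the
    previously accepted trigger; a burst of jitter collapses to one event."""
    accepted = []
    for t in sorted(timestamps_ms):
        if not accepted or t - accepted[-1] >= window_ms:
            accepted.append(t)
    return accepted

# Hypothetical trigger times: a jittery burst, a double hit, and a clean hit.
triggers = [0, 12, 30, 95, 250, 260, 400]
print(debounce_collisions(triggers))  # prints [0, 250, 400]
```

Measuring from the last accepted trigger, rather than from the last trigger of any kind, means a long run of jitter cannot suppress events indefinitely. The trade-off is that two genuine collisions less than 100 ms apart would be counted as one.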

Each of these was a small battle. Together, they represented the kind of systems work that rarely makes it into papers but determines whether a research prototype can actually be used by real students in real training scenarios.

My Contribution

I led the design, development, and evaluation of the AR visualization pipeline and the spatial registration system that made situated ultrasound possible. This included the optical tracking integration, the contextual cue design that was our key breakthrough, and the study design for evaluating learning outcomes. This work became a central chapter of my PhD dissertation and directly influenced the patent work on enhanced ultrasound systems, as well as my subsequent work at Medivis on ultrasound landmark registration and calibrationless reference markers.

Related Patents

US20200187901A1 — Enhanced ultrasound systems and methods. Suresh, Gasques Rodrigues, Weibel, Anderson. University of California.

US20250391125A1 — Ultrasound landmark registration in an augmented reality environment. Gasques Rodrigues, Choudhry, Morley, Qian, Horowitz. Medivis Inc.

US12396703B1 — Calibrationless reference marker system. Morley, Gasques Rodrigues, Qian. Medivis Inc. (granted).

Related Publications

Li, H., Yan, W., Lu, H., Weng, W., Zhao, J., Yang, S., Gasques, D., Qian, L., Ding, H., Zhao, Z., & Wang, G. (2025). Align procedural expertise with virtual information: the key to AR-navigated ultrasound-guided biopsy. Virtual Reality, 29, 103.

Gasques, D., Jain, D., Rick, S., Shangley, L., Suresh, A., & Weibel, N. (2017). Exploring mixed reality in specialized surgical environments. CHI EA 2017.

Institutions

- UC San Diego — Ubicomp Lab, DesignLab