Why Spatial Computing Matters for Healthcare

From cognitive load to cognitive offload, and why imperfect AR might be more useful than we thought.

A surgeon doing a breast biopsy has an ultrasound on one screen, a CT on another, and the patient in front of her. While she holds a needle steady, her job is to fuse all three in her head — rotating, scaling, and translating the 2D images until they line up with the 3D anatomy she can see and feel.

She does this thousands of times a year. Most surgeons do. It works, mostly. When it doesn't, the consequences range from a longer procedure to genuine harm.

I spent my PhD trying to take some of that work out of her head. Three projects, three different problems, all on the same hunch: that the right kind of AR overlay could pull the imagining out of the surgeon and put it in the display. ARTEMIS for remote guidance. HoloCPR for emergency response. Situated ultrasound for training. Each one taught me a different version of the same lesson. I didn't see the pattern until later.

From cognitive load to cognitive offload

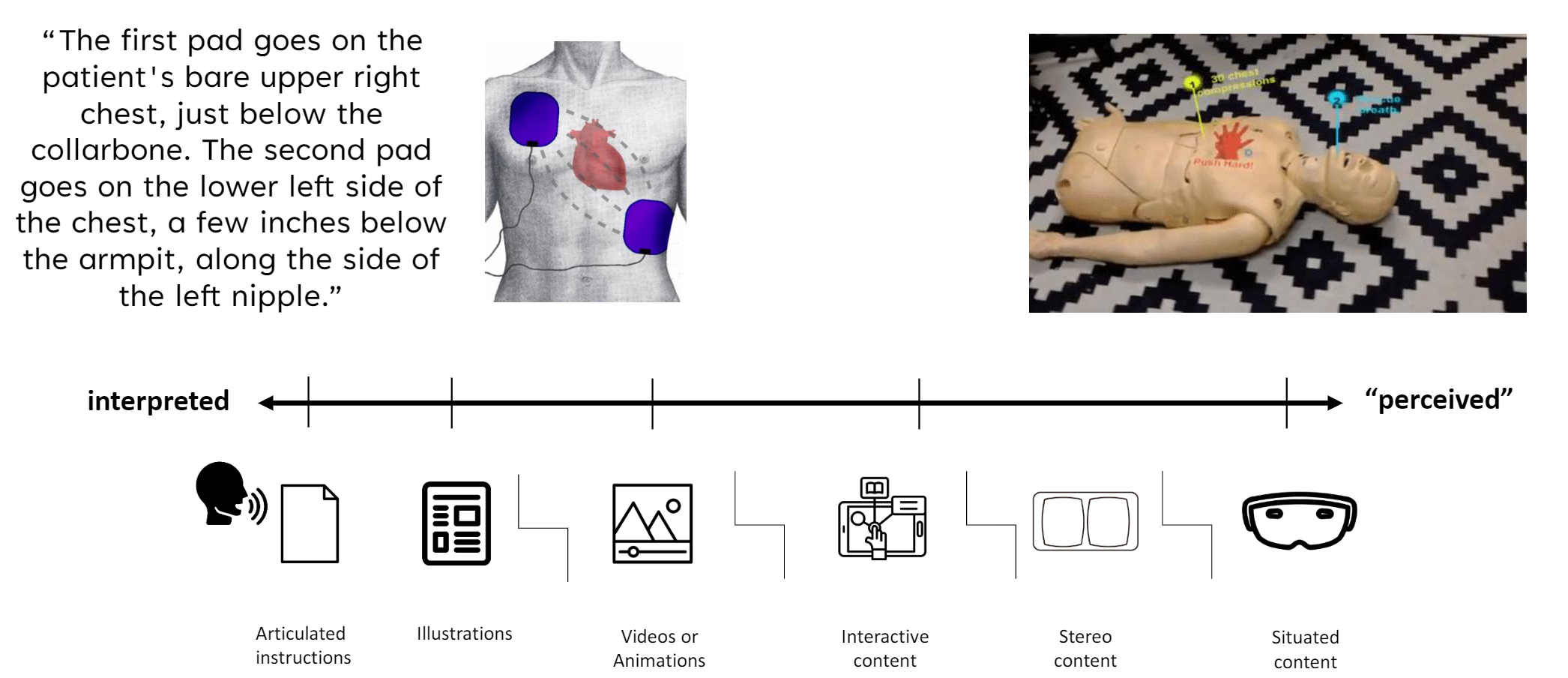

The history of medical guidance is a slow handoff of cognitive work from the user to the medium. Written instructions ask you to imagine every step. Diagrams cut some of that. Video cuts more. AR is the next step: instead of imagining where something should go, you see it sitting there.

I came to call this perception-based guidance. The work of interpreting moves from your head into the display. You don't project a needle path from a 2D ultrasound onto a 3D body. The path is already there, in your view, rendered into the same space as the anatomy.

This sounds like the end of the story. Build AR, overlay information on reality, problem solved. The rest of my PhD was learning why it isn't.

When the hardware fights you

Every system I built ran into the same wall in different ways.

In HoloCPR, users couldn't find the instructions. The HoloLens's narrow field of view meant the spatial checklist sat outside the display's visible area, and they didn't know to look for it.

In ARTEMIS, registration was good enough most of the time. But when a remote expert annotated a precise point on the patient, even small perceptual errors meant the local surgeon saw the mark a centimeter off.

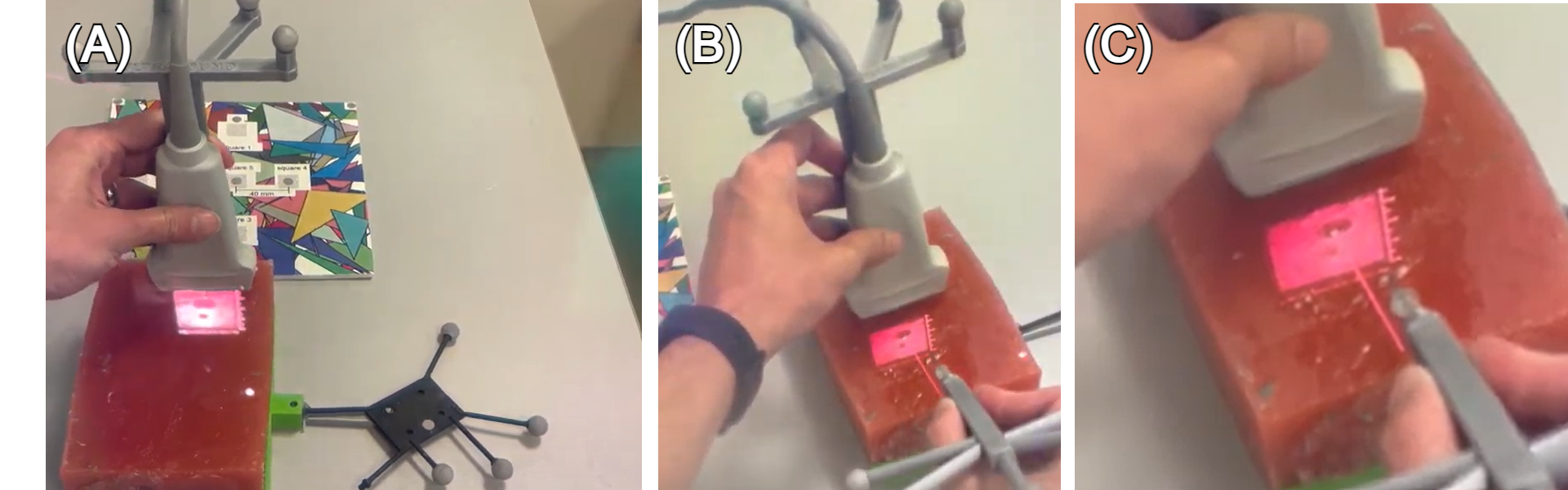

The ultrasound work was where it hurt. Medical students loved seeing the ultrasound image on the patient instead of a monitor across the room — until they tried to guide a needle through it. The display was off by enough to make it useless. In a domain that tolerates a millimeter or two, “close enough” is not close at all.

We measured the limits. The HoloLens 2 tracks both the head and the eyes — just not precisely enough. A 1 mm error in the eye-to-display calibration becomes more than 5 mm by the time the overlay lands on the patient. For needle work, that's not acceptable.

Three kinds of cues

Looking back across the three systems, I noticed something I'd missed while building them. Every time we made one of these systems actually work, we'd added a specific kind of visual aid. And the aids weren't all the same kind. They fell into three categories.

Perceptual cues help you read depth and space: shadows, occlusion, motion parallax, stereo. They mimic how the eye reads the physical world, and most modern rendering engines support them out of the box.

Interaction cues help you find your way around the AR environment. The HoloCPR compass that always pointed toward the spatial checklist was an interaction cue. It turned “where is the information?” into “follow the arrow.”

Contextual cues were the surprise. They don't carry new clinical information. They give the user a visible anchor — something to compare the AR overlay against, so misalignment becomes legible instead of invisible.
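To make the interaction-cue idea concrete, here is a minimal sketch of how a compass-style arrow might decide where to point. It is not the HoloCPR implementation. The function name `compass_cue`, the flat 2D coordinate convention, and the 30-degree field-of-view default are all assumptions chosen for illustration.

```python
import math

def compass_cue(head_pos, head_yaw_deg, target_pos, fov_deg=30.0):
    """Return (visible, turn_deg) for a target on the horizontal plane.

    head_pos, target_pos: (x, z) positions in meters in a shared world frame.
    head_yaw_deg: direction the user faces; 0 = +z axis, positive = toward +x.
    fov_deg: assumed horizontal field of view of the display (illustrative).
    """
    dx = target_pos[0] - head_pos[0]
    dz = target_pos[1] - head_pos[1]
    bearing = math.degrees(math.atan2(dx, dz))  # direction from head to target
    # Signed angle the user must turn, wrapped into [-180, 180).
    turn = (bearing - head_yaw_deg + 180.0) % 360.0 - 180.0
    visible = abs(turn) <= fov_deg / 2.0
    return visible, turn

def arrow_label(visible, turn_deg):
    """Translate the cue into what the interface would show."""
    if visible:
        return "target in view"
    return "turn right" if turn_deg > 0 else "turn left"

# Hypothetical scene: the checklist sits directly to the user's right.
visible, turn = compass_cue(head_pos=(0.0, 0.0), head_yaw_deg=0.0,
                            target_pos=(1.0, 0.0))
print(arrow_label(visible, turn), round(turn))
```

The point of the sketch is that the cue carries no clinical content. It only converts the target's position, relative to where the user is looking, into a direction to turn.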

Contextual cues

Take the ultrasound problem again. Students could see the image but couldn't aim through it because the registration was off. We tried adding a virtual needle in the same coordinate system as the situated ultrasound. Both visualizations were offset from physical reality by the same amount. They were wrong, together.

But they agreed. The virtual needle pointed exactly where the ultrasound showed anatomy. A student aiming the virtual needle could hit the target on the ultrasound, even though both were floating an inch off the patient. The display was wrong relative to the world but right relative to itself.

It worked. Students could guide needles using a display that was, by any standard measurement, misaligned.
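The reason this works is plain geometry: a rigid offset applied to two overlays changes where they sit in the world, but not where they sit relative to each other. The sketch below illustrates that with made-up numbers. The positions, the size of the registration error, and the helper names `apply_rigid` and `distance` are all hypothetical, not values or code from our system.

```python
import math

def apply_rigid(point, yaw_deg, translation):
    """Rotate a 3D point about the vertical (y) axis, then translate it."""
    x, y, z = point
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    rx, rz = c * x + s * z, -s * x + c * z
    tx, ty, tz = translation
    return (rx + tx, y + ty, rz + tz)

def distance(a, b):
    """Euclidean distance between two 3D points."""
    return math.dist(a, b)

# Hypothetical true positions in the patient frame, in millimeters.
target = (10.0, 0.0, 40.0)      # lesion as imaged by the ultrasound
needle_tip = (12.0, 5.0, 25.0)  # tip of the tracked needle

# Hypothetical registration error, shared by every overlay in the display.
error = {"yaw_deg": 2.0, "translation": (20.0, -8.0, 12.0)}

shown_target = apply_rigid(target, **error)
shown_tip = apply_rigid(needle_tip, **error)

absolute_error = distance(target, shown_target)  # overlay vs. physical world
true_gap = distance(needle_tip, target)          # real tip-to-target distance
shown_gap = distance(shown_tip, shown_target)    # same distance as displayed

print(f"overlay is off by {absolute_error:.1f} mm in the world")
print(f"tip-to-target gap: true {true_gap:.3f} mm, shown {shown_gap:.3f} mm")
```

The overlay is wrong by centimeters in absolute terms, yet the displayed tip-to-target gap matches the true one. This only holds when both overlays share the same error. If the needle and the ultrasound were registered independently, their errors would not cancel.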

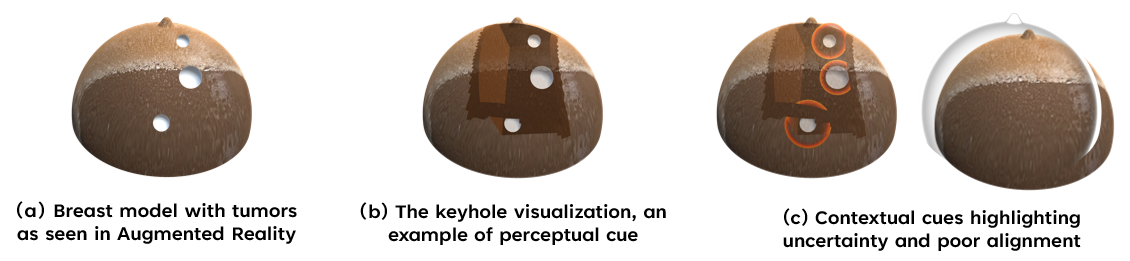

We saw the same effect in a different prototype: an AR-guided breast biopsy system in which we placed a translucent breast model over the physical phantom. The model didn't tell the surgeon anything she didn't already know. It anchored every other AR overlay to a visible object, so when registration drifted, she could see the drift against the model and work around it.

Why this matters

The story in medical AR has been that registration accuracy is the gating factor. Build better trackers, calibrate more precisely, wait for the next headset. Better hardware will help, eventually. But this work suggests a parallel path: AR interfaces can improve performance even when alignment isn't perfect, if you design the right visual anchors.

That changes the question. Instead of “how do we get sub-millimeter registration?” you can also ask “what cues help the user work with the millimeters we have?” The first question is a hardware problem on a multi-year horizon. The second is a design problem you can work on tomorrow.

The pattern shows up wherever multiple coordinate systems sit on the same patient: image-guided surgery with CT, MRI, ultrasound, and direct vision, each registered with a different error budget. The systems become useful when they agree with each other, not when they're individually perfect.

None of this was about the technology. The technology stopped mattering a few iterations in. What mattered was making the surgeon's head a less crowded place. Better hardware will help with that, eventually. The cues we can already build help with it now.

“Death by GPS”

Even if we one day get perfect registration, we will still need contextual cues, because they give clinicians a way to check the system's work instead of trusting it blindly. “Death by GPS” happens when drivers follow turn-by-turn instructions without looking out the window. In the same way, an overlay that is normally well aligned can still lead to bad decisions if the user has no way to notice when the world and the display start to disagree. Contextual cues anchor guidance to visible structure in the scene, so the user can keep asking, “Do these overlays make sense here?” and catch errors before they turn into harm.